13.11.19

Autonomous weapons: targeting people should be prohibited

By Richard Moyes

Autonomous weapons systems (AWS) raise fundamental ethical concerns. The direct targeting of human beings, in particular, has been widely criticized as inhumane and an affront to human dignity.

On the occasion of the Meeting of High Contracting Parties to the Convention on Certain Conventional Weapons (CCW), which takes place in Geneva this week, our most recent policy note:

- identifies a range of concerns raised by the targeting of people through AWS

- argues for a prohibition on the targeting of people through AWS

- stresses that an anti-personnel prohibition is not sufficient to address the wide range of urgent concerns raised by AWS

At this meeting, the states parties to the CCW will decide how to take their deliberations on ‘Lethal Autonomous Weapons Systems’ forward next year. In light of the pressing concerns raised by AWS, the ethically appropriate course of action is to develop new rules, along with social and institutional practices, to prevent moral and legal wrongs.

Support is growing among states parties for the negotiation of some sort of regulatory instrument, though considerable disagreement and ambiguity persist regarding its objective, form, scope and content. Article 36, a founding member of the Campaign to Stop Killer Robots, supports the call for a legally binding instrument that combines prohibitions, including an anti-personnel prohibition, with positive obligations aimed at ensuring that human beings retain meaningful control over the use of force.

A ban on AWS enjoys strong public support. A recent opinion poll carried out in ten European countries shows that 73% of those polled want their governments “to work towards an international ban on lethal autonomous weapons systems.” It is urgent that states parties to the CCW adopt a mandate this week that is responsive to expressions of the public conscience and paves the way for a strong international legal instrument.

Read the policy note:

Policy note

November 2019

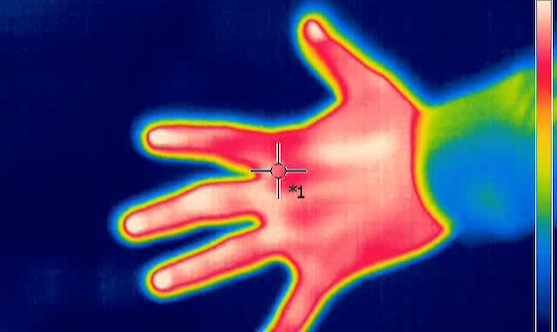

Featured image: Infrared thermography image of a hand (Jarek Tuszyński / CC-BY-SA-3.0)